Signed-off-by: jackfiled <xcrenchangjun@outlook.com> Reviewed-on: #21

| title | date | tags |
|---|---|---|
| High Performance Computing 25 SP Heterogeneous Computing | 2025-05-10T00:36:20.5391570+08:00 | |

Heterogeneous Computing is on the way!

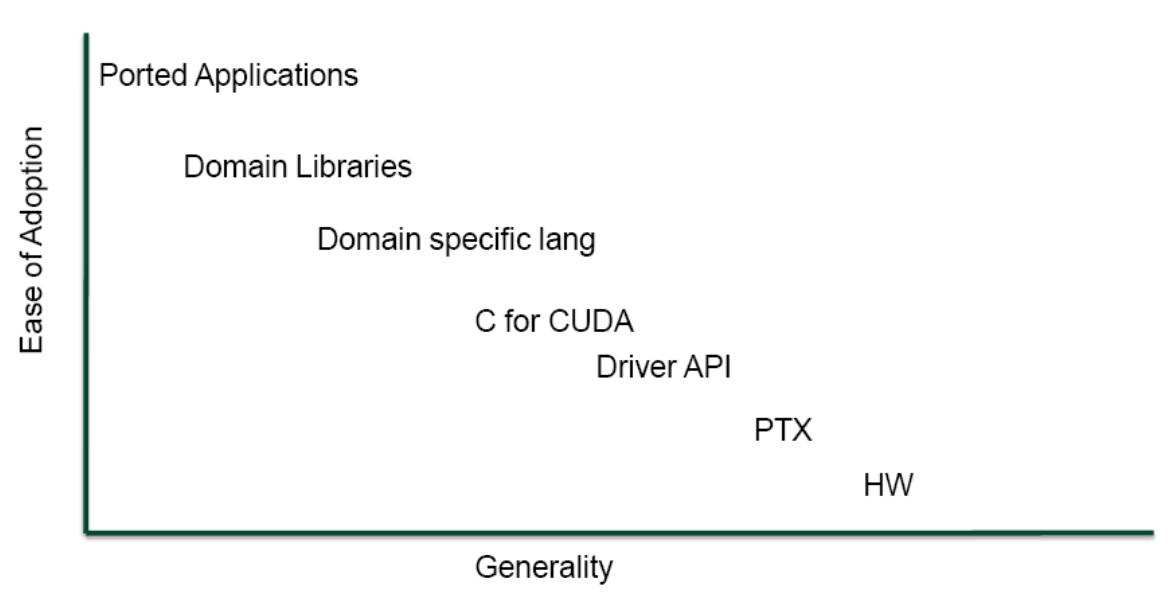

GPU Computing Ecosystem

CUDA: NVIDIA's Architecture for GPU computing.

Internal Buses

HyperTransport:

Primarily a low-latency, direct chip-to-chip interconnect; it also supports mapping to board-to-board interconnects such as PCIe.

PCI Express

A switched, point-to-point interconnect.
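As a rough illustration of what a point-to-point link provides, the one-direction bandwidth of a PCIe link can be estimated from the per-lane transfer rate, the line encoding, and the lane count. The sketch below uses the per-lane rates and encodings of PCIe 1.0 through 5.0; the function name `pcie_bandwidth_gb_per_s` is made up for this example, and the estimate ignores protocol overhead such as packet headers.

```python
# Per-lane raw rate in GT/s and line-encoding efficiency for each PCIe generation.
PCIE_GENERATIONS = {
    1: (2.5, 8 / 10),      # 8b/10b encoding
    2: (5.0, 8 / 10),      # 8b/10b encoding
    3: (8.0, 128 / 130),   # 128b/130b encoding
    4: (16.0, 128 / 130),
    5: (32.0, 128 / 130),
}


def pcie_bandwidth_gb_per_s(generation: int, lanes: int) -> float:
    """Estimate one-direction bandwidth in GB/s, ignoring packet overhead."""
    rate_gt, efficiency = PCIE_GENERATIONS[generation]
    # Each transfer carries one bit per lane; divide by 8 to convert bits to bytes.
    return rate_gt * efficiency * lanes / 8


if __name__ == "__main__":
    for gen in sorted(PCIE_GENERATIONS):
        print(f"PCIe {gen}.0 x16: {pcie_bandwidth_gb_per_s(gen, 16):.2f} GB/s")
```

Under these assumptions a PCIe 3.0 x16 slot, a common GPU attachment, comes out at a little under 16 GB/s per direction.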

NVLink

OpenCAPI

In the professional world, heterogeneous computing was mostly limited to HPC; in the consumer world it was a "nice to have".

However, OpenCAPI has since been absorbed by CXL.

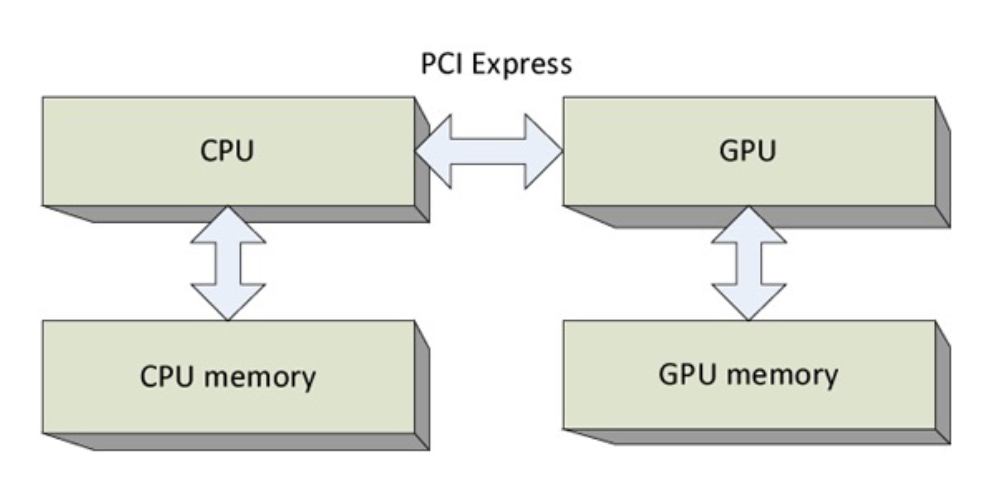

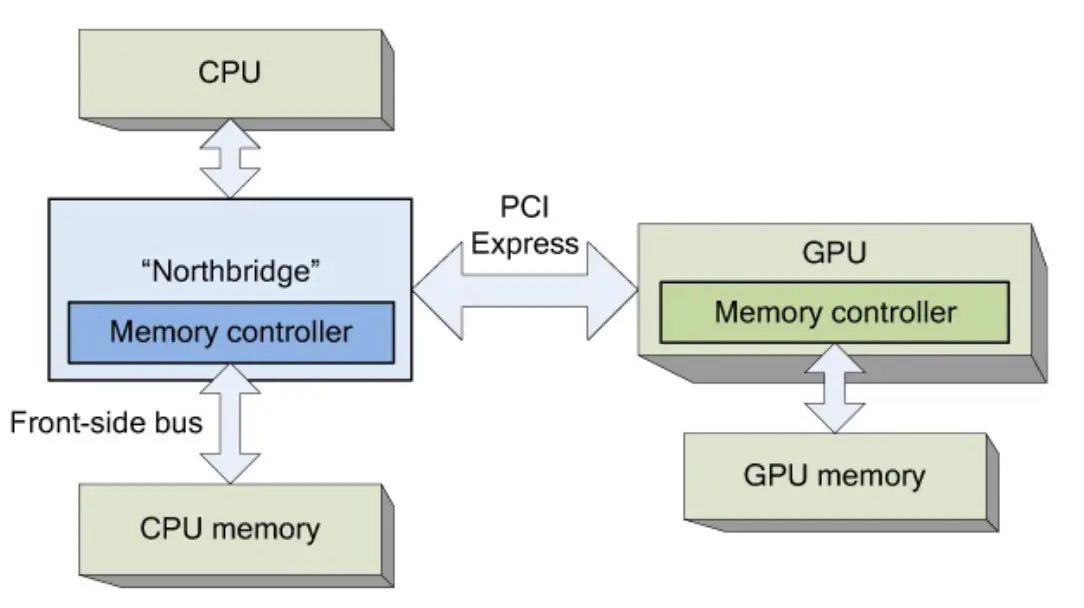

CPU-GPU Arrangement

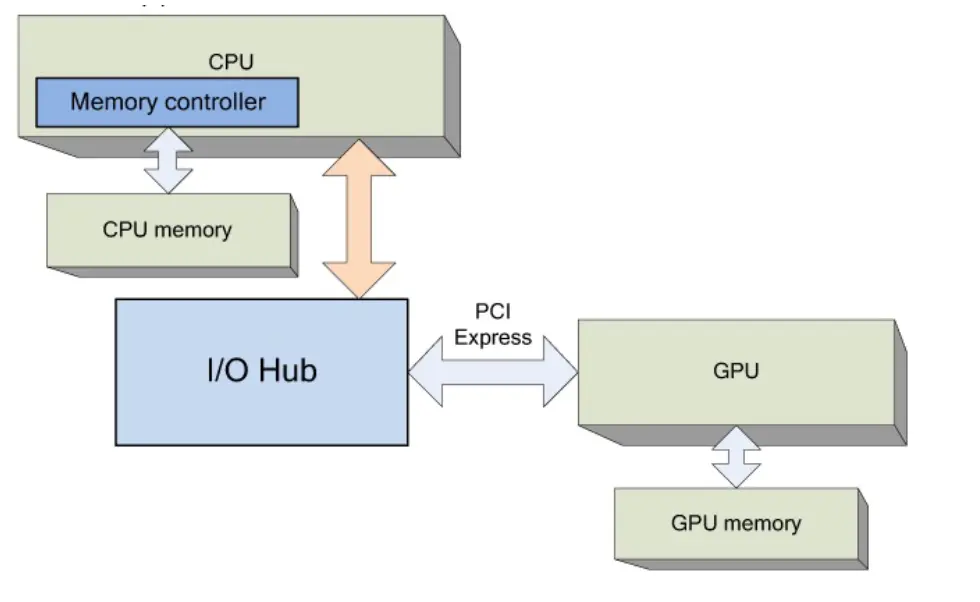

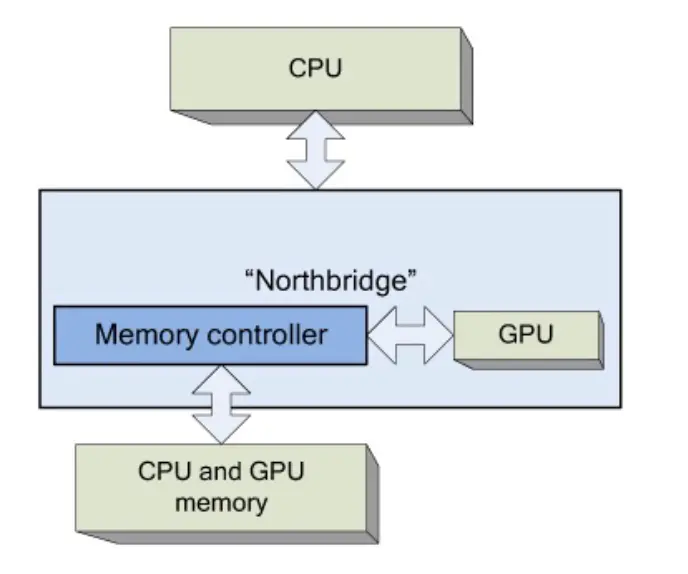

First Stage: Intel Northbridge

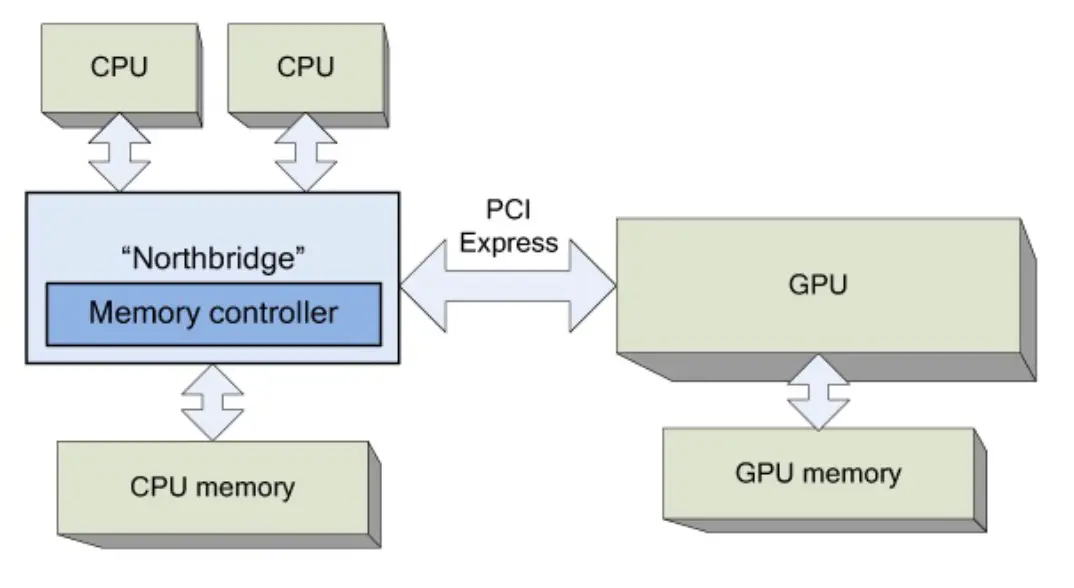

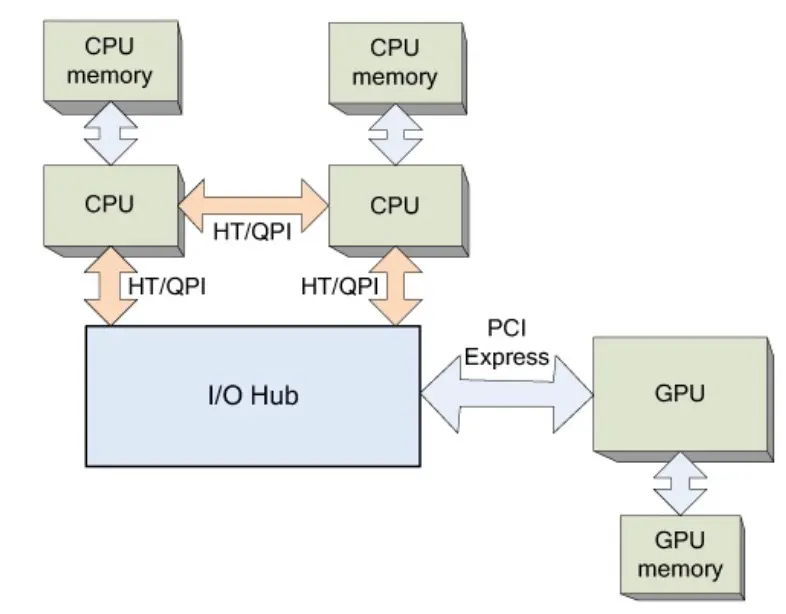

Second Stage: Symmetric Multiprocessors:

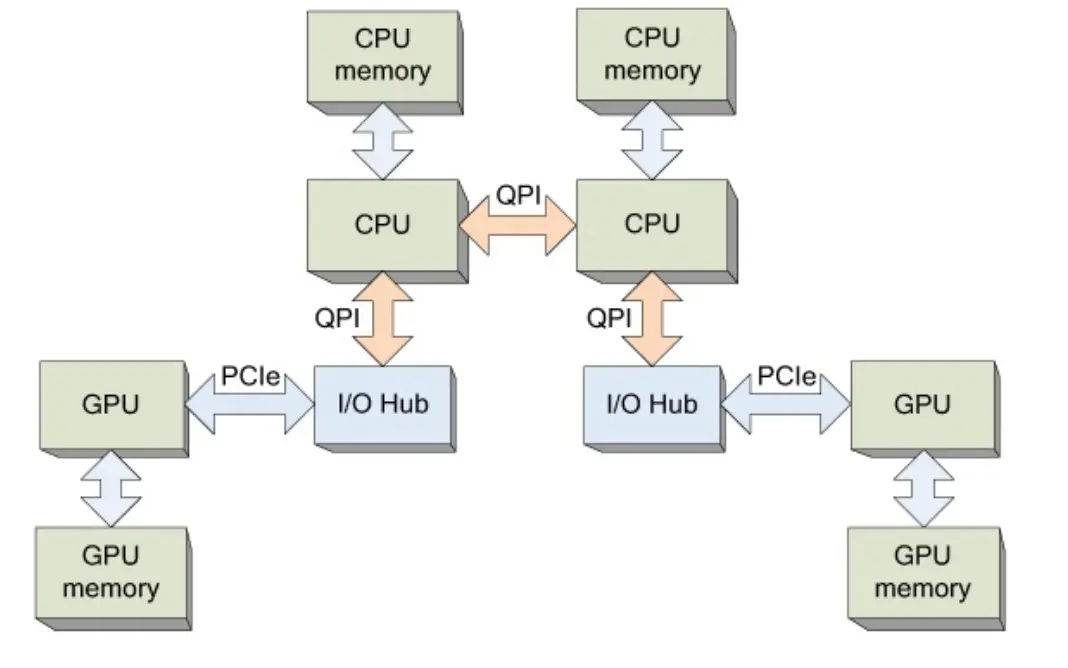

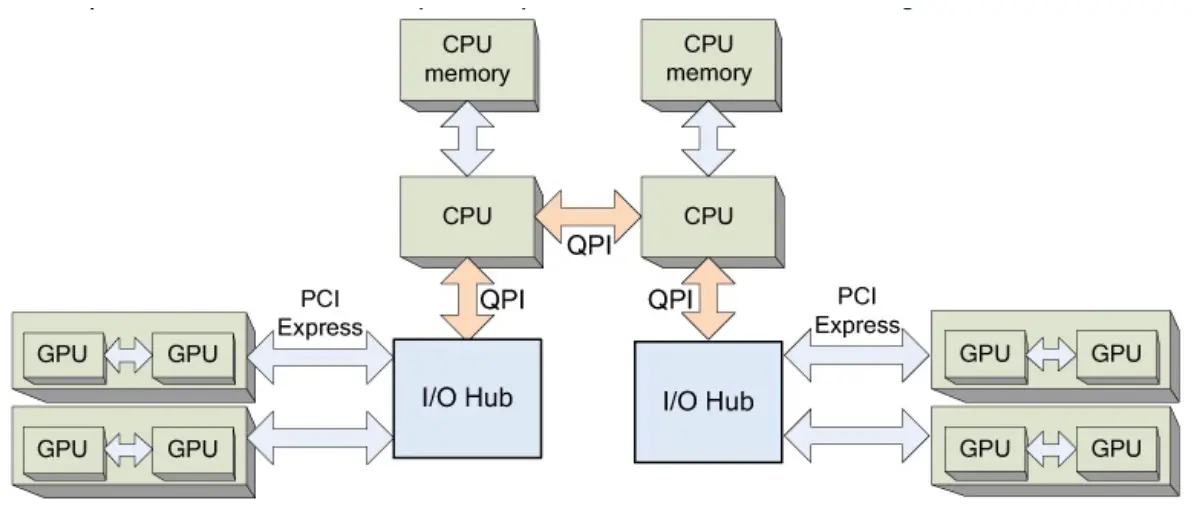

Third Stage: Nonuniform Memory Access

At this stage, the memory controller is integrated directly into the CPU.

In this context, a system with multiple CPUs, each with its own local memory, is called NUMA.
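On Linux, the NUMA layout of a machine can be inspected through sysfs, where each node appears as a `nodeN` directory under `/sys/devices/system/node`. The following is a minimal sketch, assuming that layout is present; the helper name `numa_nodes` is hypothetical, and on systems without this directory it simply returns an empty mapping.

```python
from pathlib import Path


def numa_nodes(sysfs_root: str = "/sys/devices/system/node") -> dict[int, str]:
    """Map each NUMA node id to its CPU list string, e.g. {0: "0-15"}."""
    nodes: dict[int, str] = {}
    root = Path(sysfs_root)
    if not root.is_dir():
        return nodes  # not Linux, or no NUMA information exposed
    for entry in root.glob("node[0-9]*"):
        cpulist = entry / "cpulist"
        if cpulist.is_file():
            node_id = int(entry.name[len("node"):])
            nodes[node_id] = cpulist.read_text().strip()
    return nodes


if __name__ == "__main__":
    for node_id, cpus in sorted(numa_nodes().items()):
        print(f"node {node_id}: CPUs {cpus}")
```

A machine that reports more than one node has memory whose access cost depends on which CPU issues the request, which is the property the name NUMA refers to.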

Such a system can also host multiple GPUs:

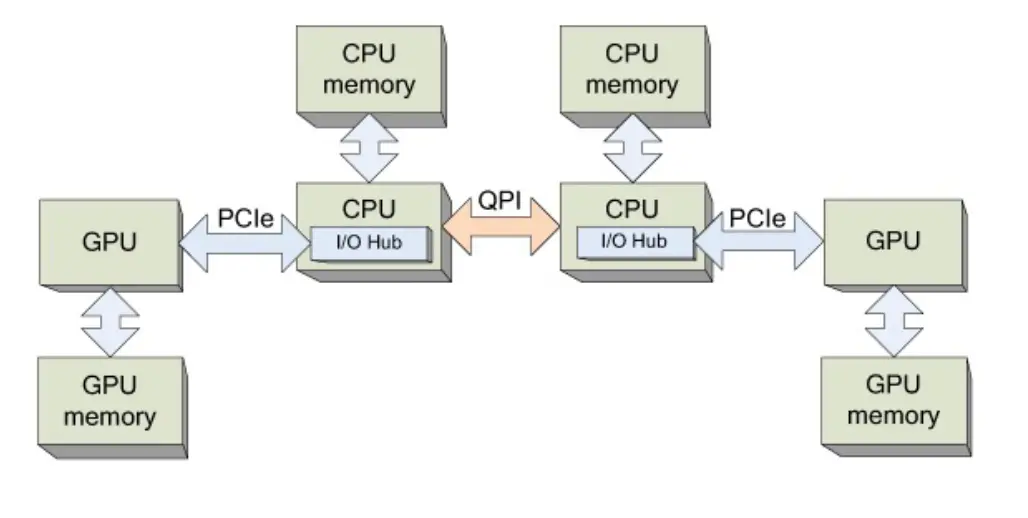

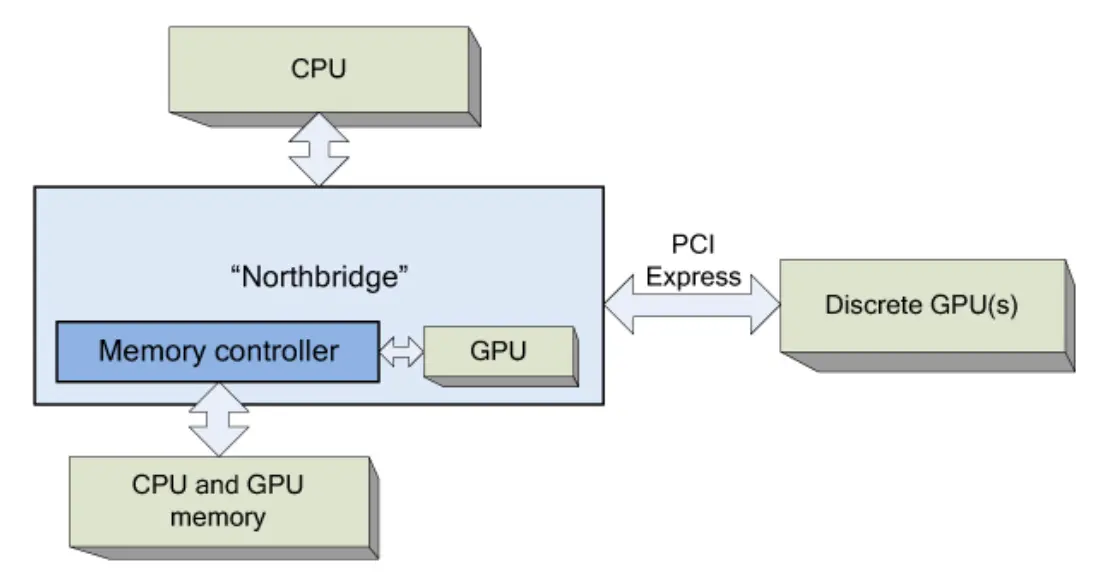
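In a multi-GPU NUMA machine, each GPU hangs off the PCIe lanes of one particular CPU, so it is closer to that CPU's memory than to the others. On Linux this attachment is visible in sysfs: every PCI device directory has a `class` file and a `numa_node` file. The sketch below, with the hypothetical helper name `gpu_numa_affinity`, lists devices whose PCI base class is `0x03` (display controller) together with the node they report; it assumes the standard `/sys/bus/pci/devices` layout and returns an empty mapping elsewhere.

```python
from pathlib import Path


def gpu_numa_affinity(pci_root: str = "/sys/bus/pci/devices") -> dict[str, int]:
    """Map the PCI address of each display-class device to its NUMA node."""
    affinity: dict[str, int] = {}
    root = Path(pci_root)
    if not root.is_dir():
        return affinity  # not Linux, or PCI devices not exposed
    for dev in sorted(root.iterdir()):
        class_file = dev / "class"
        node_file = dev / "numa_node"
        if not (class_file.is_file() and node_file.is_file()):
            continue
        # PCI base class 0x03 is "display controller"; the file holds e.g. 0x030000.
        if class_file.read_text().strip().lower().startswith("0x03"):
            # The kernel reports -1 when the device has no known node affinity.
            affinity[dev.name] = int(node_file.read_text().strip())
    return affinity


if __name__ == "__main__":
    for address, node in gpu_numa_affinity().items():
        print(f"{address}: NUMA node {node}")
```

Pinning the host thread that feeds a GPU to the CPUs of the same node is one common use of this information.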

Fourth Stage: Integrated PCIe in CPU

There is also the so-called integrated CPU, which integrates a GPU into the CPU chip.

The integrated GPU can work alongside discrete GPUs: